Yi-Chi Tsai (a) and Tsung-Nan Chou (b)*

(a) Department of Computer Science and Information Engineering, Chaoyang University of Technology, Taichung, 413, Taiwan, R.O.C.

(b) Department of Finance, Chaoyang University of Technology, Taichung, 413, Taiwan, R.O.C.

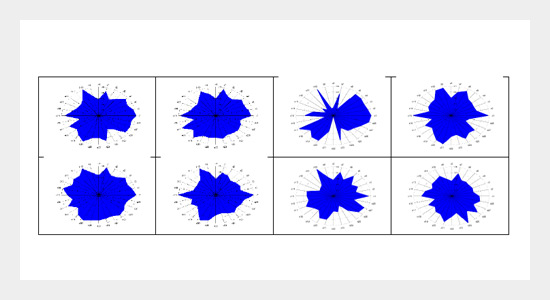

ABSTRACT

In recent years, small businesses and companies across industries have faced the challenges of big data analysis, since large volumes of time series and cross-sectional data are collected daily from their business activities. Such data may require dimensionality reduction to lower the complexity of the analysis. This study used two feature patterns, polar graphs and contour graphs, to represent the mutual relationships among the Asian stock markets, and applied geometric and invariant measures in place of the original variables in an attempt to reduce the dimensionality of the input variables.

Moreover, several feature selection and extraction methods were implemented with predictive models so that their effectiveness could be compared with that of the proposed feature patterns. Three conventional machine learning approaches were employed as benchmark models to predict the daily change of the Taiwan stock market index based on the transformed graphs. In addition, the predictive performance of three deep learning approaches, namely a stacked autoencoder, a deep neural network, and a convolutional neural network (CNN), was evaluated with different network structures and feature selection strategies. With the input variables organized as multivariate time series and two-dimensional encoded images, the experimental results showed that the deep learning models outperformed the other machine learning models. In particular, the CNN model with 1D convolution improved the predictive accuracy to 0.67, with a precision of 0.51 and a recall of 0.55. The results also suggested that the two-dimensional polar and contour graph transformations might provide abstract features that help the deep learning approaches explore historical patterns. As the CNN models with 1D and 2D convolution performed better than the rest of the deep learning models, further studies might be needed to investigate how the two models could complement each other to create synergies.
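As an illustration of how geometric measures of a polar graph can stand in for many input variables, the sketch below places n values on equally spaced rays and summarizes the resulting polygon by its area and perimeter. This is a minimal sketch under stated assumptions, not the authors' procedure: the function name `polar_polygon_measures`, the choice of area and perimeter as the measures, and the requirement that values be rescaled to non-negative radii beforehand are all hypothetical choices made for the example.

```python
import math


def polar_polygon_measures(values):
    """Return (area, perimeter) of the polygon formed by plotting
    non-negative values as radii on equally spaced rays.

    Hypothetical helper for illustration only; the measures used in
    the study are not specified in the abstract.
    """
    n = len(values)
    if n < 3:
        raise ValueError("need at least three values to form a polygon")
    if any(r < 0 for r in values):
        raise ValueError("radii must be non-negative; rescale inputs first")

    step = 2.0 * math.pi / n
    # Convert each (radius, angle) pair to Cartesian coordinates.
    points = [(r * math.cos(i * step), r * math.sin(i * step))
              for i, r in enumerate(values)]

    twice_area = 0.0
    perimeter = 0.0
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]
        twice_area += x1 * y2 - x2 * y1            # shoelace term
        perimeter += math.hypot(x2 - x1, y2 - y1)  # edge length
    return abs(twice_area) / 2.0, perimeter
```

Called on one day's rescaled values for n markets, the function reduces n inputs to two summary features, which is the kind of dimensionality reduction the abstract describes in general terms.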

Keywords: Deep learning; dimensionality reduction; polar graph; contour mapping.

ARTICLE INFORMATION

Received: 2017-11-01

Revised: 2018-07-04

Accepted: 2018-07-05

Available Online: 2018-12-01

Cite this article:

Tsai, Y.C., Chou, T.N. 2018. Deep learning applications in pattern analysis based on polar graph and contour mapping. International Journal of Applied Science and Engineering, 15, 183-198. https://doi.org/10.6703/IJASE.201812_15(3).183