Ricardo Catanghal Jr* College of Computer Studies, University of Antique, Antique, Philippines

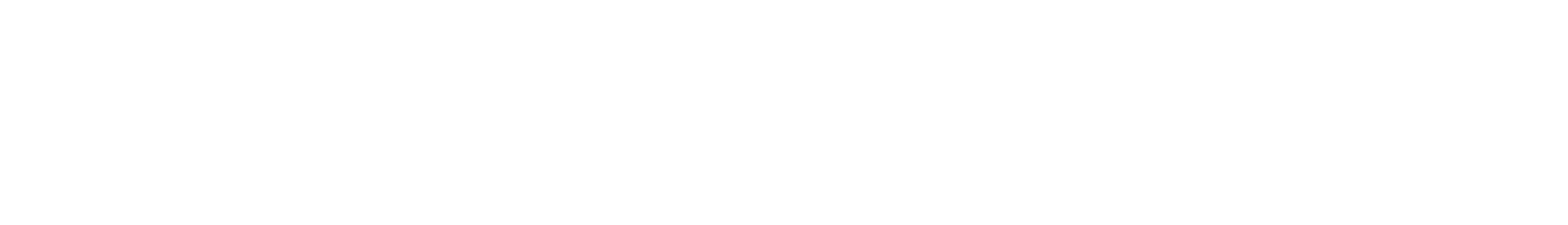

ABSTRACT

Sound event detection remains an active research area within sound recognition, although the vast majority of papers focus on speech and music. This paper presents and discusses a framework for a study room sound event detection system. Feature extraction techniques are used to obtain a parametric representation of sound for analysis by machine listening systems in intelligent homes, specifically in the study room. Among the sound categories analyzed, the door knock was detected least accurately, but its accuracy of 95.00% is currently regarded as good in the field, which makes the parameters suitable for detecting surrounding sounds. The performance of the CNN in detecting environmental sounds was analyzed using the defined parameters, giving an overall accuracy of 96.8%. The results are promising for machine-learning-based sound detection as a technology for innovative learning environments.

Keywords:

Smart homes study room, Innovative learning technologies, Machine learning, Artificial neural network (ANN).

ARTICLE INFORMATION

Received: 2021-02-01

Revised: 2021-04-11

Accepted: 2021-05-11

Available Online: 2021-06-21

Cite this article:

Catanghal Jr, R. 2021. Sound detection for study room monitoring and evaluation. International Journal of Applied Science and Engineering, 18, 2021040. https://doi.org/10.6703/IJASE.202106_18(4).005

Copyright The Author(s). This is an open access article distributed under the terms of the Creative Commons Attribution License (CC BY 4.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are cited.